Bigger means better: a common misconception. While giant models often deliver better performance, they come at a heavy price: they require more memory and can slow down the very applications they are meant to power.

In their paper “The Curious Case of In-Training Compression of State Space Models,” T. Konstantin Rusch et al. challenge this idea. In many models, efficiency comes at the expense of capability; this work shows that the trade-off is not inevitable. The researchers introduce CompreSSM, a method that lets State Space Models (SSMs) shrink while they are still being trained, yielding models that are smaller, faster, and cheaper to run.

State Space Models are known for their ability to process long inputs, from lengthy texts to complex DNA sequences. They rely on an internal state: a fixed-size representation of the context seen so far. Enlarging that state can increase model capacity, but it also raises memory use and computational cost, making such models harder to run on everyday hardware.
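To make the role of the state size concrete, here is a minimal sketch of a single-input, single-output linear SSM recurrence in NumPy. It is a simplified illustration, not the architecture studied in the paper: the function name `run_ssm`, the dense state matrix `A`, and the example values are assumptions. Modern SSM layers typically use structured (often diagonal) state matrices and run many such channels in parallel, but the point carries over: the model carries only the n-entry vector `x` through the sequence, and both that memory and the per-step work grow with n.

```python
import numpy as np


def run_ssm(A: np.ndarray, B: np.ndarray, C: np.ndarray, u: np.ndarray) -> np.ndarray:
    """Scan a single-input, single-output linear SSM over a sequence u.

    Recurrence (hypothetical illustration): x_k = A x_{k-1} + B u_k,  y_k = C x_k,
    starting from x_0 = 0. The state x has n = A.shape[0] entries no matter
    how long the input sequence is.
    """
    n = A.shape[0]
    x = np.zeros(n)            # the fixed-size internal state
    y = np.empty(len(u))
    for k, u_k in enumerate(u):
        x = A @ x + B * u_k    # dense A: n * n multiply-adds per step
        y[k] = C @ x           # read out one scalar from the state
    return y


# Demo with hypothetical stable systems of two different state sizes.
rng = np.random.default_rng(0)
u = rng.standard_normal(1_000)
for n in (8, 64):
    A = 0.95 * np.eye(n)       # hypothetical stable state matrix
    B = rng.standard_normal(n)
    C = rng.standard_normal(n)
    y = run_ssm(A, B, C, u)
    print(f"n={n}: {len(y)} outputs, {n} state entries carried, "
          f"{n * n} multiply-adds per step for A @ x")
```

Halving n halves the state carried between steps and, for a dense `A`, cuts the cost of `A @ x` by roughly a factor of four; these are the kinds of savings that reducing the state dimension targets.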

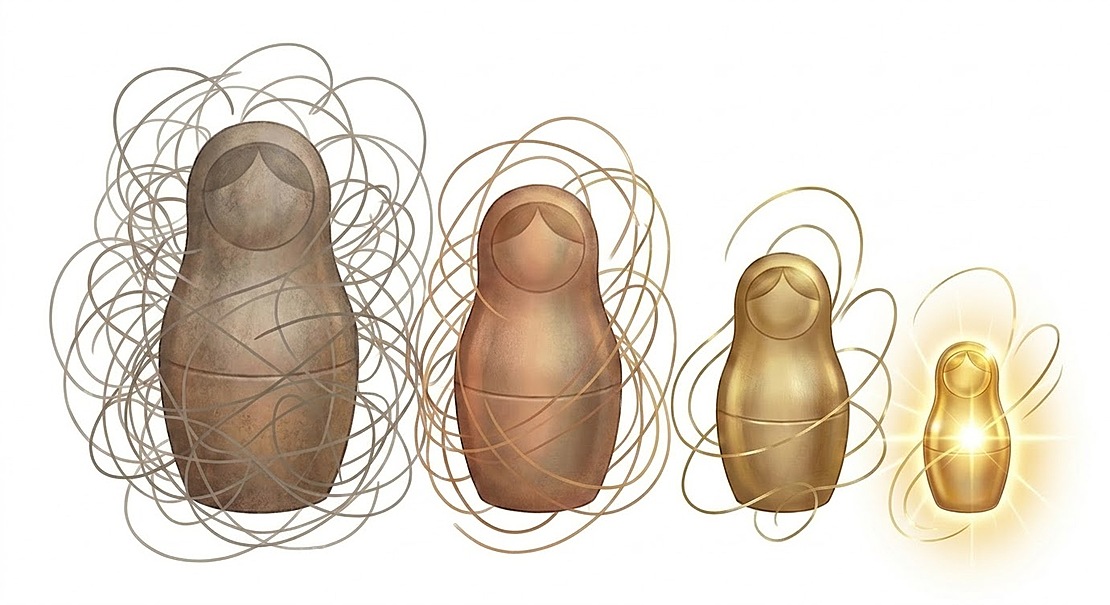

Traditionally, this challenge is addressed by compressing a model after training. This approach has notable drawbacks: it requires fully training a large model first, which is computationally expensive, and removing components afterward can degrade performance. The key innovation of Rusch et al.'s work is in-training compression: instead of waiting until the end, CompreSSM monitors the model's internal structure and compresses it while training is still under way.

Using balanced truncation, a technique from mathematical control theory, the algorithm identifies which dimensions of the model's internal state carry meaningful information and which contribute little. It then removes the unnecessary dimensions during training, shrinking the underlying model. Importantly, this process comes with guaranteed error bounds: researchers know in advance an upper limit on the approximation error each reduction introduces, and the remaining training can compensate for it.
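For readers who want to see the control-theory idea itself, here is a minimal sketch of classical square-root balanced truncation for a small, stable, single-input single-output discrete-time linear system (x_{k+1} = A x_k + B u_k, y_k = C x_k), using NumPy and SciPy. It is not CompreSSM's implementation: the function names `balanced_truncation` and `freq_response`, the 4-state example system, and the choice of SciPy's `solve_discrete_lyapunov` are our own illustrative assumptions. The Hankel singular values rank state directions by how much they contribute to the input-output behavior, and the discarded ones give the standard a-priori bound of twice their sum on the worst-case (H-infinity) error.

```python
import numpy as np
from scipy.linalg import solve_discrete_lyapunov


def balanced_truncation(A, B, C, r):
    """Reduce a stable, minimal discrete-time system (A, B, C) to order r.

    Returns the reduced matrices, the Hankel singular values of the full
    system, and the classical bound 2 * sum(discarded values) on the
    H-infinity norm of the error. Illustrative sketch, not CompreSSM's code.
    """
    # Controllability Gramian P solves  A P A^T - P + B B^T = 0
    P = solve_discrete_lyapunov(A, B @ B.T)
    # Observability Gramian Q solves  A^T Q A - Q + C^T C = 0
    Q = solve_discrete_lyapunov(A.T, C.T @ C)
    P, Q = (P + P.T) / 2, (Q + Q.T) / 2   # remove round-off asymmetry

    # Square-root factors P = Lc Lc^T and Q = Lo Lo^T (need a minimal system)
    Lc = np.linalg.cholesky(P)
    Lo = np.linalg.cholesky(Q)

    # Hankel singular values: singular values of Lo^T Lc, in descending order
    U, hsv, Vt = np.linalg.svd(Lo.T @ Lc)

    # Balancing transform: in the new coordinates both Gramians equal diag(hsv)
    s_inv_sqrt = np.diag(hsv ** -0.5)
    T = Lc @ Vt.T @ s_inv_sqrt
    T_inv = s_inv_sqrt @ U.T @ Lo.T

    # Keep only the r state directions with the largest Hankel singular values
    Ar = T_inv[:r] @ A @ T[:, :r]
    Br = T_inv[:r] @ B
    Cr = C @ T[:, :r]
    error_bound = 2.0 * hsv[r:].sum()
    return Ar, Br, Cr, hsv, error_bound


def freq_response(A, B, C, omegas):
    """Evaluate G(z) = C (zI - A)^{-1} B at z = exp(i * omega) for a SISO system."""
    I = np.eye(A.shape[0])
    return np.array([(C @ np.linalg.solve(np.exp(1j * w) * I - A, B)).item()
                     for w in omegas])


# Hypothetical 4-state example: distinct stable poles and nonzero B, C entries,
# so the system is controllable and observable.
A = np.diag([0.9, 0.5, -0.3, -0.7])
B = np.ones((4, 1))
C = np.ones((1, 4))
Ar, Br, Cr, hsv, bound = balanced_truncation(A, B, C, r=2)

# Approximate the worst-case error on a frequency grid (real system: [0, pi] suffices)
w = np.linspace(0.0, np.pi, 2000)
err = np.abs(freq_response(A, B, C, w) - freq_response(Ar, Br, Cr, w)).max()
print("Hankel singular values:", hsv)
print(f"max error on grid: {err:.4f}  (a-priori bound: {bound:.4f})")
```

The grid maximum can only underestimate the true worst-case error, so it always stays below the bound. How and when CompreSSM applies such reductions inside a training loop is beyond this sketch.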

The result is a level of efficiency that was previously difficult to achieve. Models compressed with CompreSSM use considerably less memory and run much faster than their uncompressed counterparts. CompreSSM also outperforms earlier approaches, such as knowledge distillation and regularized training, in both speed and accuracy.

This work was carried out in collaboration with Makram Chahine and Daniela Rus (Massachusetts Institute of Technology), as well as Philipp Nazari (Max Planck Institute for Intelligent Systems). It has been accepted to ICLR 2026, the Fourteenth International Conference on Learning Representations, in Rio de Janeiro, Brazil. The poster will be presented on Friday, April 24, at 10:30 a.m. local time.

- Find the full paper here.

- Learn more about Konstantin’s research.