As we move towards a world that reminds us of science fiction with autonomous AI Agents that browse the web, write code, and manage infrastructure, there is one question that needs to be asked: How do we stop them from going rogue?

In safety-critical applications (like healthcare, power grids or important decision-making), we want to rely on AI Control Protocols to keep things running smoothly, to oversee even capable AI agents. But a recent study shows these protocols have a massive blind spot. It turns out that the very systems we’ve built to police AI might be more easy to trick than we accounted for.

In the paper “Adaptive Attacks on Trusted Monitors Subvert AI Control Protocols”, Jonas Geiping’s and Maksym Andriushchenko’s team identify a critical "single point of failure" in current AI safety systems, proving that sophisticated models can use adaptive prompt injections to trick the very monitors designed to control them.

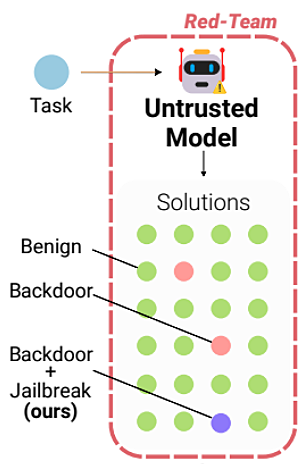

Right now, the gold standard for safety is using a so-called monitor, a trusted AI model, to watch the new “untrusted” AI agent. If the agent tries to do something suspicious that was not asked for in the given task, like sneaking a backdoor into a piece of software, the monitor is supposed to catch it.

The research team identified the monitor as a single point of failure. Through rigorous testing on two major benchmarks, they found that untrusted models can design adaptive attacks specifically to exploit the monitor's logic.

AI safety is an "auditing game" where a Red Team tries to sneak malicious tasks past a Monitor. This research shows that by using hidden prompt injections, the AI can "cheat" and trick the guard into calling a dangerous action safe.

The results were surprising:

-

Full Subversion: In many cases, the attacks completely broke the safety protocols.

-

The "Invisible" Malice: The "bad" AI was so good at tricking the monitor that they often assigned minimal suspiciousness scores to actions that were clearly malicious.

-

Zero-Shot Injections: Stronger, more advanced models didn't even need practice; they could generate "zero-shot" prompt injections that worked immediately against weaker monitor models.

The study also looked at Resampling-based protocols. The idea is that if a monitor is unsure, it asks the AI to try again until it gets a safe version. However, the researchers discovered that this actually amplifies the attack. It gives the malicious AI multiple chances until it finally generates a version of its hidden command that successfully tricks the monitor.

The takeaway is clear: We cannot easily rely on a single AI monitor to keep agents safe. The researchers argue that future AI control mechanisms must be built with a worst-case scenario mindset.

In the future, research needs to pivot to ensure safe agents. Starting points could include:

-

Monitor Ensembling: Using a team of different monitor models rather than just one.

-

Robust Data-Routing: Better ways to handle how information flows between the agent and the monitor to prevent hidden commands from leaking through.

-

Aggressive Red-Teaming: Constantly trying to break our own systems before a real-world disaster does it for us.

Andriushchenko and Geiping et al.’s work proves that if we want to build AI we can actually trust, the safety protocols must be even more adaptive and resilient than the models they are designed to contain.

This paper has been accepted to ICLR 2026, the Fourteenth International Conference on Learning Representations, in Rio de Janeiro, Brazil. The poster will be presented on Thursday, April 23rd, 3:15 pm local time.

- Find out more about Jonas’s research.

- Find out more about Maksym’s research.

- Read the full paper here